In this blog post, we present our approach for using Deep Reinforcement Learning as an alternative to existing solutions for navigation in AAA video games. This work was also selected for a spotlight presentation at the Challenges of Real-World RL workshop at NeurIPS.

A crucial part of non-player characters (NPCs) in games is navigation, which allows them to move from one point to another on a map. The most popular approach for NPC navigation in the video game industry is to use a navigation mesh (NavMesh), which is a graph representation of the map whose nodes and edges indicate traversable areas. Unfortunately, complex navigation abilities that extend the character’s capacity for movement, e.g., grappling hooks, jetpacks, teleportation, jump pads, or double jumps, increase the complexity of the NavMesh, making it infeasible in many practical scenarios.

As an alternative, we propose to use Deep Reinforcement Learning (Deep RL) to learn how to navigate on 3D maps using any navigation abilities. We tested our approach in a popular game engine on complex 3D environments that are notably an order of magnitude larger than maps typically used in the Deep RL literature. We also included some recent results where we test our approach by running it directly in the engine of a AAA Ubisoft game called Hyper Scape. We find that our approach performs surprisingly well, achieving at least 90% success rate in point-to-point navigation on all tested scenarios.

Be sure to check out the paper for more details and the video appendix to see more of our trained agents in action. If you have any questions, feel free to email us directly.

Legend

Here’s a brief legend that will hopefully make everything easier to follow. The agent, represented by a blue cube, is tasked with navigating to the goal, represented by an orange puck.

The agent has access to the following action set and abilities:

![[LA Forge] Deep Reinforcement Learning for Navigation in AAA Video Games - legend white](http://staticctf.ubisoft.com/J3yJr34U2pZ2Ieem48Dwy9uqj5PNUQTn/72jJGjRuJ59aF2zyXjus8e/1133cf984cdab195f330232fb4c2a2d4/-La_Forge-legend_white.gif)

For those unfamiliar, double jumps allow the agent to interrupt its first jump with another jump midair. Moreover, jump pads propel the agent very high when stepped on.

Building a NavMesh in practice

![[LA Forge] Deep Reinforcement Learning for Navigation in AAA Video Games - schema nav](http://staticctf.ubisoft.com/J3yJr34U2pZ2Ieem48Dwy9uqj5PNUQTn/36UymRdtVAobjRpJCmLzFH/99f08e377954ccbaa1e4ae10b6b81412/-La_Forge-_schema_nav.gif)

Figure 1: Basics of classical navigation on a schematic map

Figure 1 describes the basics of classical navigation on a schematic map. A NavMesh is generated from the raw geometry of the world as a set of independently traversable areas. Once the graph is formed, a path is found between any two points on the mesh using classical path finding algorithms such as Dijkstra’s algorithm or A*. The path is then smoothed and the agent follows that path.

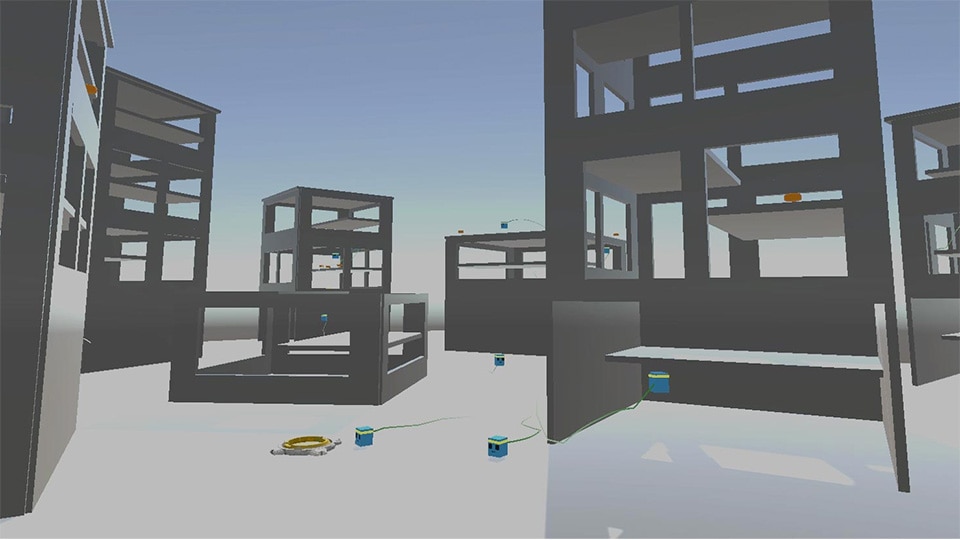

We constructed a toy map to highlight some of the limitations of a NavMesh (Figure 2).

The map consists of many buildings that each have several floors the agent can navigate to. It also has two jump pads. We note that the roofs are designed to only be accessible via the jump pads or a double jump from the top floor.

![[LA Forge] Deep Reinforcement Learning for Navigation in AAA Video Games - toy map](http://staticctf.ubisoft.com/J3yJr34U2pZ2Ieem48Dwy9uqj5PNUQTn/1yNK5vv0DAo4SQZpV0yyDi/7c9b52ce977e141cd10c163f849cd362/-La_Forge-_toymap_with_legend.png)

Figure 2: Toy map

Figure 3 shows the NavMesh for this map (in blue) and highlights some of the problems it would have in finding a path between the two roofs. First, jump pads are not included in the NavMesh, since geometrically speaking they are not connected to all the areas they allow the agent to reach (e.g., the roof). Moreover, separate floors are also disconnected from one another in the NavMesh. Therefore, to use the jump pads or to be able to navigate from floor to floor via jumps and double jumps, additional links connecting these areas are required.

![[LA Forge] Deep Reinforcement Learning for Navigation in AAA Video Games - Figure 3: Toy map with NavMesh](http://staticctf.ubisoft.com/J3yJr34U2pZ2Ieem48Dwy9uqj5PNUQTn/3hFeXjru4356Wj8tQdhCPS/0e5d9195911042f361e7955b937ad867/-La_Forge-_toymap_with_legend.png)

Figure 3: Toy map with NavMesh (in blue)

Additional links scale poorly

You can find below a short clip of us manually adding links stemming from a single jump pad in order to connect it to other areas like floors and roofs. As you can see, even at 20 times the speed, this process is very labor intensive. We note that this is all for a single jump pad on a single small map.

![[LA Forge] Deep Reinforcement Learning for Navigation in AAA Video Games - Manual addition of links](http://staticctf.ubisoft.com/J3yJr34U2pZ2Ieem48Dwy9uqj5PNUQTn/4SBJBOFtEWYp0QeXYYWnLu/0784d487f3804c139a96607f78d692bd/-La_Forge-_additional_links_x20.gif)

Figure 4: Manual addition of links

In addition to being labor-intensive, adding links dramatically expands the graph, which increases the space as well as the time complexity of finding the shortest path. Using a NavMesh becomes intractable in many practical situations, and we thus must compromise between navigation cost and quality. This is accomplished in practice by either removing some of the navigation abilities or by pruning most of the links.

Main Takeaway

NavMesh-based navigation can be achieved by adding links, but quickly becomes intractable in large worlds with complex navigation abilities like double jumps, jump pads, jetpacks, and teleports.

Deep RL for Navigation

We now describe our alternative approach to navigation, based on deep RL. At a high–level, the two ingredients that our method needs are the map, which notably does not have a NavMesh, and the navigation abilities. We use model free deep RL to train a neural network to perform point–to–point navigation on a given map using movement abilities.

Our neural network architecture is as follows. As inputs, the agent receives a local view of the world, represented in terms of a 2D depth map, a 3D occupancy map, its current location and the current goal location, as well as some other low-level features such as its current speed and acceleration. These inputs are then passed through feed forward and convolutional neural networks, as well as an LSTM to handle the potential partial observability. The output of the network is an embedding passed to both the policy and the value networks. We train these networks using Soft-Actor Critic.

![[LA Forge] Deep Reinforcement Learning for Navigation in AAA Video Games - Neural network architecture](http://staticctf.ubisoft.com/J3yJr34U2pZ2Ieem48Dwy9uqj5PNUQTn/5uR0uEdOZiY16aqf8KH2dh/5b3f0b5e6a6bb21f2e6385b0357089c2/-La_Forge-_architecture.jpeg)

Figure 5: Neural network architecture

Toy map

Here’s a short video that highlights our now trained deep RL system on our toy map of 100 meters by 100 meters. After training, our deep RL agent achieves a 100% success rate in point-to-point navigation on this map. We recall that there’s no NavMesh, path requests, or anything of the sort. A neural network takes in the inputs from the world and outputs navigation actions.

Scaling up the map

We also test our system on maps that are more representative of the kind of environments one would encounter in many AAA games. Here is a top-down view of our realistic environment of 300 by 300 meters, with tons of jump pads, and obstacles.

![[LA Forge] Deep Reinforcement Learning for Navigation in AAA Video Games - Realistic environment](http://staticctf.ubisoft.com/J3yJr34U2pZ2Ieem48Dwy9uqj5PNUQTn/QETflLOaibJuGWQbIwmjt/853f60344c35baffabdf20bc2c81a258/-La_Forge-_big_map_6_smaller-2048x845.jpeg)

Figure 6: Realistic environment

Notably the exact same solution achieves a 93% success rate in point-to-point navigation. Note that this does not necessarily mean that the agent fails the other 7% of the time as there may not exist a valid path.

Hyper Scape map

Finally, we push our Deep RL system to the absolute limit by testing it inside Hyper Scape, a recently released AAA video game from Ubisoft. The agent is tasked with solving several maps which scale all the way up to 1 kilometer by 1 kilometer. Here is a short video highlighting our results in Hyper Scape. Note that as these results are hot off the press, they weren’t included in our original paper.

In this work, we showed that Deep Reinforcement Learning can be an alternative to the NavMesh for navigation in complicated 3D maps, such as the ones found in AAA video games. Unlike the NavMesh, the Deep RL system is able to handle navigation actions without the need to manually specify each individual link. We find that our approach performs surprisingly well on all tested scenarios. As future work, we plan to test how well this approach can generalize to new goal locations and new maps that it has not seen during training.

Authors

ELOI ALONSO, Ubisoft La Forge, Canada

MAXIM PETER, Ubisoft La Forge, Canada

DAVID GOUMARD, Ubisoft La Forge, Canada

JOSHUA ROMOFF, Ubisoft La Forge, Canada